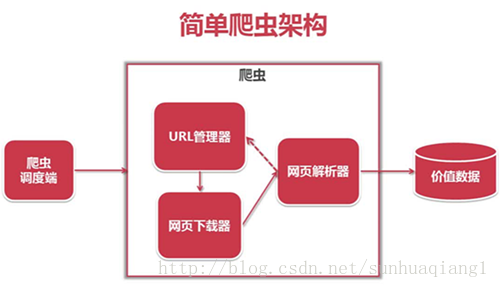

讲解Python的Scrapy爬虫框架使用代理进行采集的方法

1.在Scrapy工程下新建“middlewares.py”

# Importing base64 library because we'll need it ONLY in case if the proxy we are going to use requires authentication import base64 # Start your middleware class class ProxyMiddleware(object): # overwrite process request def process_request(self, request, spider): # Set the location of the proxy request.meta['proxy'] = "http://YOUR_PROXY_IP:PORT" # Use the following lines if your proxy requires authentication proxy_user_pass = "USERNAME:PASSWORD" # setup basic authentication for the proxy encoded_user_pass = base64.encodestring(proxy_user_pass) request.headers['Proxy-Authorization'] = 'Basic ' + encoded_user_pass

2.在项目配置文件里(./project_name/settings.py)添加

DOWNLOADER_MIDDLEWARES = {

'scrapy.contrib.downloadermiddleware.httpproxy.HttpProxyMiddleware': 110,

'project_name.middlewares.ProxyMiddleware': 100,

}

只要两步,现在请求就是通过代理的了。测试一下^_^

from scrapy.spider import BaseSpider

from scrapy.contrib.spiders import CrawlSpider, Rule

from scrapy.http import Request

class TestSpider(CrawlSpider):

name = "test"

domain_name = "whatismyip.com"

# The following url is subject to change, you can get the last updated one from here :

# http://www.whatismyip.com/faq/automation.asp

start_urls = ["http://xujian.info"]

def parse(self, response):

open('test.html', 'wb').write(response.body)

3.使用随机user-agent

默认情况下scrapy采集时只能使用一种user-agent,这样容易被网站屏蔽,下面的代码可以从预先定义的user- agent的列表中随机选择一个来采集不同的页面

在settings.py中添加以下代码

DOWNLOADER_MIDDLEWARES = {

'scrapy.contrib.downloadermiddleware.useragent.UserAgentMiddleware' : None,

'Crawler.comm.rotate_useragent.RotateUserAgentMiddleware' :400

}

注意: Crawler; 是你项目的名字 ,通过它是一个目录的名称 下面是蜘蛛的代码

#!/usr/bin/python

#-*-coding:utf-8-*-

import random

from scrapy.contrib.downloadermiddleware.useragent import UserAgentMiddleware

class RotateUserAgentMiddleware(UserAgentMiddleware):

def __init__(self, user_agent=''):

self.user_agent = user_agent

def process_request(self, request, spider):

#这句话用于随机选择user-agent

ua = random.choice(self.user_agent_list)

if ua:

request.headers.setdefault('User-Agent', ua)

#the default user_agent_list composes chrome,I E,firefox,Mozilla,opera,netscape

#for more user agent strings,you can find it in http://www.useragentstring.com/pages/useragentstring.php

user_agent_list = [\

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/22.0.1207.1 Safari/537.1"\

"Mozilla/5.0 (X11; CrOS i686 2268.111.0) AppleWebKit/536.11 (KHTML, like Gecko) Chrome/20.0.1132.57 Safari/536.11",\

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.6 (KHTML, like Gecko) Chrome/20.0.1092.0 Safari/536.6",\

"Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.6 (KHTML, like Gecko) Chrome/20.0.1090.0 Safari/536.6",\

"Mozilla/5.0 (Windows NT 6.2; WOW64) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/19.77.34.5 Safari/537.1",\

"Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/536.5 (KHTML, like Gecko) Chrome/19.0.1084.9 Safari/536.5",\

"Mozilla/5.0 (Windows NT 6.0) AppleWebKit/536.5 (KHTML, like Gecko) Chrome/19.0.1084.36 Safari/536.5",\

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1063.0 Safari/536.3",\

"Mozilla/5.0 (Windows NT 5.1) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1063.0 Safari/536.3",\

"Mozilla/5.0 (Macintosh; Intel Mac OS X 10_8_0) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1063.0 Safari/536.3",\

"Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1062.0 Safari/536.3",\

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1062.0 Safari/536.3",\

"Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1061.1 Safari/536.3",\

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1061.1 Safari/536.3",\

"Mozilla/5.0 (Windows NT 6.1) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1061.1 Safari/536.3",\

"Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1061.0 Safari/536.3",\

"Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/535.24 (KHTML, like Gecko) Chrome/19.0.1055.1 Safari/535.24",\

"Mozilla/5.0 (Windows NT 6.2; WOW64) AppleWebKit/535.24 (KHTML, like Gecko) Chrome/19.0.1055.1 Safari/535.24"

]